Today I’ll explain how to set up CI/CD (Continuous Integration / Continuous Deployment) with Terraform and AWS CodePipeline. To do that we use the same repositories as the other articles in this series:

See the full code for the Terraform infrastructure part here, and the full code for the NodeJS part here.

What are we going to cover today?

- 1. Setup Terraform (openapi-tf-example repo)

- 2. Setup NodeJS source code (openapi-node-example repo)

- 3. Conclusion

- 4. What’s next

1. Setup Terraform (openapi-tf-example repo)

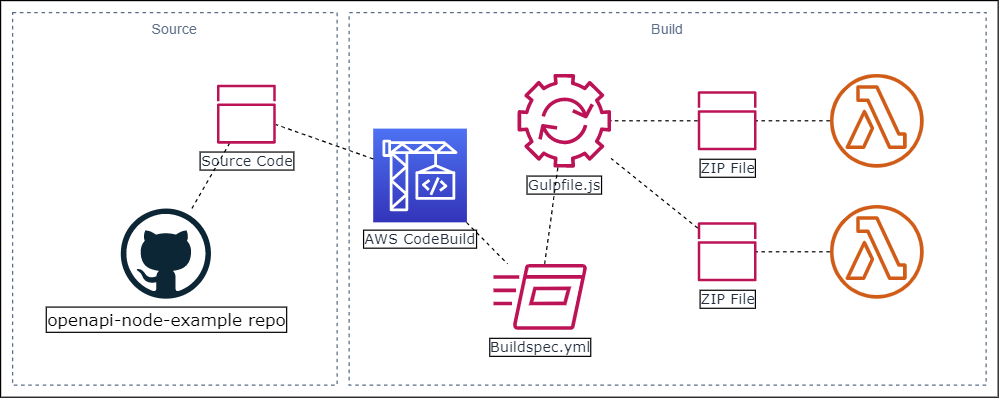

To set up CI/CD we are going to use a service that runs an automated pipeline for us. On AWS that service is called CodePipeline; with it we can define any number of stages that specify how our deployments are automated.

Once this whole setup is done, our code is deployed automatically at every commit to the master branch of our NodeJS repository.

But first, let’s look at the CodePipeline setup we’ll be using:

Note the two distinct halves of the solution:

- Source: the stage where the source code is retrieved from the designated GitHub repository.

- Build: the stage where AWS CodeBuild receives the source code and can perform actions on it within a Linux or Windows environment.

1.1 Source code

The Source code stage of the pipeline looks like this:

    resource "aws_codepipeline" "_" {
      name     = "${local.resource_name}-codepipeline"
      role_arn = module.iam_codepipeline.role_arn

      # S3 bucket where CodePipeline stores the artifacts passed between stages
      artifact_store {
        location = aws_s3_bucket.artifact_store.bucket
        type     = "S3"
      }

      stage {
        name = "Source"

        action {
          name             = "Source"
          category         = "Source"
          owner            = "ThirdParty"
          provider         = "GitHub"
          version          = "1"
          output_artifacts = ["source"]

          configuration = {
            OAuthToken           = var.github_token
            Owner                = var.github_owner
            Repo                 = var.github_repo
            Branch               = var.github_branch
            PollForSourceChanges = var.poll_source_changes
          }
        }
      }

      # The "Build" stage from section 1.2 goes here
    }

The GitHub repo is connected using an OAuth token (OAuthToken). When the PollForSourceChanges argument is set to true, CodePipeline periodically checks the configured Branch and starts the pipeline whenever it finds a new commit.

The output artifacts, “source”, will be stored in this S3 bucket:

    resource "aws_s3_bucket" "artifact_store" {
      bucket        = "${local.resource_name}-codepipeline-artifacts-${random_string.postfix.result}"
      acl           = "private"
      force_destroy = true

      lifecycle_rule {
        enabled = true

        expiration {
          days = 5
        }
      }
    }

The lifecycle rule expires objects 5 days after they are created, so old artifacts do not pile up in the bucket.

1.2 AWS CodeBuild

Now the second stage of the Pipeline is the AWS CodeBuild phase. This stage of the aws_codepipeline resource looks like this:

    stage {
      name = "Build"

      action {
        name            = "Build"
        category        = "Build"
        owner           = "AWS"
        provider        = "CodeBuild"
        version         = "1"
        input_artifacts = ["source"]

        configuration = {
          ProjectName = aws_codebuild_project._.name
        }
      }
    }

This is pretty self-explanatory: the stage takes the “source” artifact from the S3 bucket defined previously and points to the CodeBuild configuration using the ProjectName.

In the aws_codebuild_project resource we set which artifacts we have and where they come from, the environment the build runs in, and any environment variables we need access to during the build run.

    resource "aws_codebuild_project" "_" {
      name          = "${local.resource_name}-codebuild"
      description   = "${local.resource_name}_codebuild_project"
      build_timeout = var.build_timeout
      badge_enabled = var.badge_enabled
      service_role  = module.iam_codebuild.role_arn

      artifacts {
        type           = "CODEPIPELINE"
        namespace_type = "BUILD_ID"
        packaging      = "ZIP"
      }

      environment {
        compute_type    = var.build_compute_type
        image           = var.build_image
        type            = "LINUX_CONTAINER"
        privileged_mode = var.privileged_mode

        environment_variable {
          name  = "LAMBDA_FUNCTION_NAMES"
          value = var.lambda_function_names
        }

        environment_variable {
          name  = "LAMBDA_LAYER_NAME"
          value = var.lambda_layer_name
        }
      }

      source {
        type      = "CODEPIPELINE"
        buildspec = data.template_file.buildspec.rendered
      }
    }

The details of the build run are in the buildspec file referenced in the source block.

1.2.1 Buildspec file

In this file we define the build phases and, amongst other things, the commands each phase needs to execute for us. For a full overview of the whole specification, look at the official documentation here.

What we need is pretty simple:

    version: 0.2
    run-as: root

    env:
      variables:
        LAMBDA_FUNCTION_NAMES: $LAMBDA_FUNCTION_NAMES
        LAMBDA_LAYER_NAME: $LAMBDA_LAYER_NAME

    phases:
      install:
        run-as: root
        runtime-versions:
          nodejs: 10
        commands:
          - pip3 install awscli --upgrade --user
          - npm install gulp-cli -g
          - npm install
      build:
        run-as: root
        commands:
          - gulp update --functions "$LAMBDA_FUNCTION_NAMES" --lambdaLayer "$LAMBDA_LAYER_NAME"
      post_build:
        commands:
          - echo " Completed Lambda & Lambda Layer updates ... "
          - echo Update completed on `date`

    artifacts:
      name: update-$(date +%Y-%m-%d)

We need an up-to-date AWS CLI to update our Lambda and Lambda layer code; the build phase then runs our custom Gulp script:

gulp update --functions "$LAMBDA_FUNCTION_NAMES" --lambdaLayer "$LAMBDA_LAYER_NAME"

This script updates the code and configuration of every function listed in the LAMBDA_FUNCTION_NAMES environment variable, as well as the Lambda layer named in the LAMBDA_LAYER_NAME environment variable.

1.3 Permissions

Both AWS CodePipeline and CodeBuild need permissions to run operations on AWS services.

For CodePipeline we need the following permissions set (see file ./policies/codepipeline-policy.json):

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "s3:GetObject",
            "s3:GetObjectVersion",
            "s3:GetBucketVersioning",
            "s3:List*",
            "s3:PutObject"
          ],
          "Resource": [
            "${s3_bucket_arn}",
            "${s3_bucket_arn}/*"
          ]
        },
        {
          "Effect": "Allow",
          "Action": [
            "codebuild:BatchGetBuilds",
            "codebuild:StartBuild"
          ],
          "Resource": "${codebuild_project_arn}"
        }
      ]
    }

CodePipeline needs access to S3 to store the source artifacts, and it needs permission to start the CodeBuild build.

For the CodeBuild phase to run properly, it needs permission to access S3, to write its logs, and to modify AWS Lambda and Lambda layer configurations. To that end we add the following policy from the file ./policies/codebuild-policy.json:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Resource": [
            "*"
          ],
          "Action": [
            "logs:CreateLogGroup",
            "logs:CreateLogStream",
            "logs:PutLogEvents",
            "lambda:GetLayerVersion",
            "lambda:PublishLayerVersion",
            "lambda:PublishVersion",
            "lambda:UpdateAlias",
            "lambda:UpdateFunctionCode",
            "lambda:UpdateFunctionConfiguration",
            "lambda:ListFunctions"
          ]
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:GetObject",
            "s3:GetObjectVersion",
            "s3:GetBucketVersioning",
            "s3:List*",
            "s3:PutObject"
          ],
          "Resource": [
            "${s3_bucket_arn}",
            "${s3_bucket_arn}/*"
          ]
        }
      ]
    }

An improvement here would be to replace the wildcard resource with the exact AWS Lambda and log resources the Lambda/Log actions apply to.

2. Setup NodeJS source code (openapi-node-example repo)

With the Terraform side of things set up, the CodeBuild phase still needs the Gulp script that executes the Lambda and Lambda-layer update statements.

2.1 Gulp file

Below is the core of the Gulp script.

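    const gulp = require('gulp')
    const minimist = require('minimist')
    const shellJS = require('shelljs')

    const options = minimist(process.argv.slice(2))
    const lambdaLayer = options.lambdaLayer
    const arrFunctions = options.functions.split(',')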

At the top we read the CLI parameters using minimist.
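One detail worth guarding against: a plain split(',') keeps surrounding whitespace and empty entries when the value is written like "identity, user,". The following is a minimal sketch of a more forgiving parser. The helper name parseFunctionNames and the sample function names are assumptions for illustration and are not part of the original repo.

```javascript
// Hypothetical helper (not in the original Gulp file): turns the
// comma-separated --functions value into a clean list of names.
function parseFunctionNames (value) {
  // Reject missing, empty, or non-string values up front.
  if (typeof value !== 'string' || value.trim() === '') {
    throw new Error('Missing or invalid --functions argument')
  }
  return value
    .split(',')
    .map(function (name) { return name.trim() })
    .filter(function (name) { return name.length > 0 })
}

// Sample value with stray whitespace and a trailing comma.
console.log(parseFunctionNames('identity, user-get,user-post,'))
```

In the Gulp file this would stand in for the bare options.functions.split(',') call, so a malformed LAMBDA_FUNCTION_NAMES value fails early instead of producing a task for an empty function name.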

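    // File-scoped so the update tasks further down can read the new layer version
    var lambdaLayerJSON = {}

    gulp.task('taskLambdaLayer', function (done) {
      // projectName and zipLayerFilename are defined elsewhere in the Gulp file
      const output = shellJS.exec('aws lambda publish-layer-version --layer-name ' + lambdaLayer + ' --zip-file fileb://' + projectName + '/' + zipLayerFilename)
      // The AWS CLI prints the published layer version as JSON on stdout
      lambdaLayerJSON = JSON.parse(output.stdout)
      done()
    })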

Next we publish the new Lambda layer code, before we update the Lambdas. This task captures the output of the shell command and parses it as JSON into a file-scoped variable, because we need the new Lambda layer version from that output.
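If the CLI call fails, its output is not valid JSON and the layer version ARN ends up undefined. A minimal sketch of a stricter parsing step follows. The helper name extractLayerVersionArn is hypothetical, and the sample output is trimmed to a few fields with made-up ARN values.

```javascript
// Sample of the JSON the AWS CLI prints for publish-layer-version,
// trimmed to the fields used here. The ARN values are made up.
const sampleStdout = JSON.stringify({
  LayerArn: 'arn:aws:lambda:eu-west-1:123456789012:layer:example-layer',
  LayerVersionArn: 'arn:aws:lambda:eu-west-1:123456789012:layer:example-layer:7',
  Version: 7
})

// Hypothetical helper: parse the CLI output and fail loudly when the
// layer version ARN is missing, instead of passing `undefined` along.
function extractLayerVersionArn (stdout) {
  let parsed
  try {
    parsed = JSON.parse(stdout)
  } catch (err) {
    throw new Error('publish-layer-version did not return JSON: ' + err.message)
  }
  if (!parsed || !parsed.LayerVersionArn) {
    throw new Error('LayerVersionArn missing from publish-layer-version output')
  }
  return parsed.LayerVersionArn
}

console.log(extractLayerVersionArn(sampleStdout))
```

Throwing here makes the Gulp task, and with it the CodeBuild run, fail at the layer step rather than later when the functions are updated with a broken ARN.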

    // Register one 'update_<name>' task per Lambda function
    arrFunctions.forEach(function (lambda) {
      gulp.task('update_' + lambda, gulp.series(
        function updateCode (done) {
          // zipFileName is defined elsewhere in the Gulp file
          shellJS.exec('aws lambda update-function-code --function-name ' + lambda + ' --zip-file fileb://' + projectName + '/' + zipFileName)
          done()
        },
        function updateConfig (done) {
          shellJS.exec('aws lambda update-function-configuration --function-name ' + lambda + ' --layers ' + lambdaLayerJSON.LayerVersionArn)
          done()
        }
      ))
    })

    // Run the per-function update tasks in parallel
    gulp.task('lambdas',
      gulp.parallel(
        arrFunctions.map(function (name) { return 'update_' + name })
      )
    )

Finally, for each function we update the code in updateCode and then update the function’s configuration in updateConfig, so that it points at the new Lambda layer version. Here we use the file-scoped lambdaLayerJSON, which stores the latest Lambda layer version ARN.
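The shellJS.exec calls above do not inspect the exit code, so a failed AWS CLI call would not stop the build. A minimal sketch of one way to handle that follows. The helper name updateLambda, the injected exec parameter and the sample values are assumptions for illustration. The extra "aws lambda wait function-updated" step is also an addition that the original script does not have.

```javascript
// Hypothetical helper: runs the update commands for one function and
// stops at the first failure. `exec` is injected so the sketch can run
// without the AWS CLI; in the Gulp file it would be shellJS.exec, whose
// result carries a numeric `code` property.
function updateLambda (exec, lambda, zipPath, layerVersionArn) {
  const commands = [
    'aws lambda update-function-code --function-name ' + lambda +
      ' --zip-file fileb://' + zipPath,
    // Assumption: wait for the code update to finish before the
    // configuration is changed.
    'aws lambda wait function-updated --function-name ' + lambda,
    'aws lambda update-function-configuration --function-name ' + lambda +
      ' --layers ' + layerVersionArn
  ]
  commands.forEach(function (command) {
    const result = exec(command)
    if (result.code !== 0) {
      throw new Error('Command failed (' + result.code + '): ' + command)
    }
  })
  return commands
}

// Dry run with a fake exec that records commands and reports success.
const seen = []
const fakeExec = function (command) {
  seen.push(command)
  return { code: 0 }
}
updateLambda(
  fakeExec,
  'identity',
  'dist/lambda.zip',
  'arn:aws:lambda:eu-west-1:123456789012:layer:example-layer:7'
)
console.log(seen.join('\n'))
```

Passing exec in as a parameter keeps the command sequence testable locally; inside a Gulp task the thrown error would surface as a failed task and therefore a failed CodeBuild run.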

3. Conclusion

All the parts of the solution are there. The only thing left is to integrate this module into our openapi-tf-example repo and we’re done!

    module "cicd" {
      source = "github.com/rpstreef/tf-cicd-lambda?ref=v1.0"

      resource_tag_name = var.resource_tag_name
      namespace         = var.namespace
      region            = var.region

      github_token        = var.github_token
      github_owner        = var.github_owner
      github_repo         = var.github_repo
      poll_source_changes = var.poll_source_changes

      lambda_layer_name     = aws_lambda_layer_version._.layer_name
      lambda_function_names = "${module.identity.lambda_name},${module.user.lambda_names}"
    }

The last two variables contain the Lambda layer name and the comma-separated Lambda function names that need to be updated once the code in the openapi-node-example repo changes.

4. What’s next

With that you can quickly set up CI/CD that works for your Serverless Lambda/Lambda-layer environment. Check out my dedicated CI/CD repo for Serverless here.

If you have any questions, comments, or improvement suggestions about the setup, do comment and share!

Thanks for reading and till next week!